Modern businesses must track data across systems. However, understanding how that data connects remains a challenge for many, because they lack holistic visibility. Data observability provides accurate insights, enabling organizations to understand, monitor, and efficiently manage their data throughout the entire tech ecosystem. This blog post will help you understand:

- The meaning of Data Observability

- How it is different from Data Quality and Data Monitoring

- Why it is important for New-Age Businesses

- How Informatica’s Modern Data Platform is helpful

What is Data Observability?

Picture a company ‘X’ where CDOs (Chief Data Officers) face the challenge of ensuring a smooth data flow across diverse consumer needs and growing data volumes. How will they ensure access to high-quality and trusted data? Enter data observability. With modern data observability tools, CDOs can gain real-time insights into data flows. This, in turn, ensures transparency across data processes and quick identification of potential bottlenecks.

Simply put, data observability is the ability to observe, measure, and understand the flow of data within an organizational system. It involves the tracking of data as it moves through various processes, pipelines, and systems. The core objective is to ensure transparency and visibility into the complete data lifecycle. Data observability can also be defined as the extent of insight you possess into your data’s status at any given moment.

“The ability to holistically understand the state and health of an organization’s data, data pipelines, data landscapes, data infrastructures, and financial governance of the data. This is accomplished by continuously monitoring, tracking, alerting, analyzing, and troubleshooting problems to reduce and prevent data errors and downtime.”

Clarifying What Isn’t Data Observability

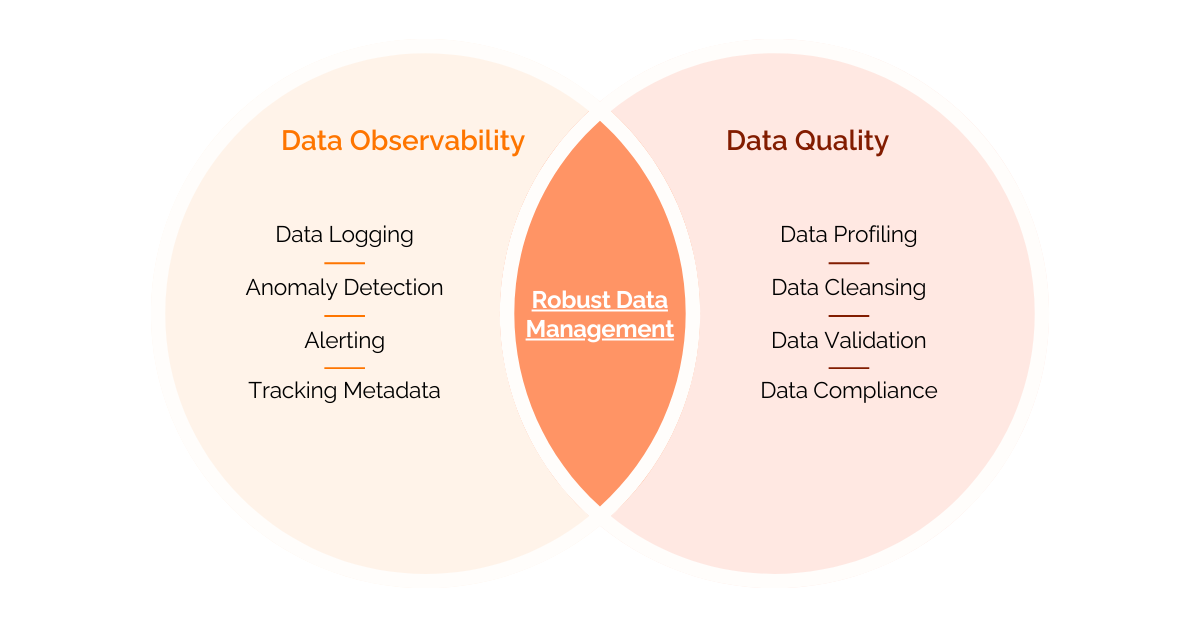

Distinguishing data observability from data quality and data monitoring is crucial, as they often get confused. Let’s explore their unique characteristics to gain a clearer understanding.

Data Observability vs. Data Quality

While data quality and data observability are related and complementary, they have distinct focuses and objectives. The objective of data quality is to ensure that data is accurate, consistent, reliable, complete, and meets the defined standards. The idea is to improve the overall quality of data for specific business use cases. Data quality is related to processes like data profiling, cleansing, validation, and adherence to data governance standards. On the other hand, data observability is directed towards understanding and monitoring the operational aspects of data.

It emphasizes providing transparency in the data pipeline, tracking data flow, and facilitating traceable data processes. Data observability is related to activities such as data logging, anomaly detection, alerting, and tracking metadata.
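
To make these activities more concrete, here is a minimal Python sketch of logging, anomaly detection, and alerting applied to a table's daily row count. It is an illustration under stated assumptions, not how any particular tool works: the function names, the three-standard-deviation threshold, and the sample row counts are all hypothetical.

```python
import logging
from statistics import mean, stdev

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("pipeline.observability")  # hypothetical logger name


def is_anomalous(history, latest, threshold=3.0):
    """Return True if `latest` is more than `threshold` standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough history to judge
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


def check_row_count(table, history, latest):
    """Log the observed row count and return "alert" or "ok"."""
    log.info("table=%s rows=%d", table, latest)  # data logging
    if is_anomalous(history, latest):            # anomaly detection
        log.warning("table=%s row count %d is outside the usual range",
                    table, latest)               # alerting
        return "alert"
    return "ok"


if __name__ == "__main__":
    # Hypothetical row counts from the last five daily loads.
    recent_counts = [1000, 1020, 980, 1010, 990]
    print(check_row_count("sales", recent_counts, 400))
    print(check_row_count("sales", recent_counts, 1005))
```

In practice the warning would be routed to a paging or chat channel rather than a log file, and the threshold would be tuned per table.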

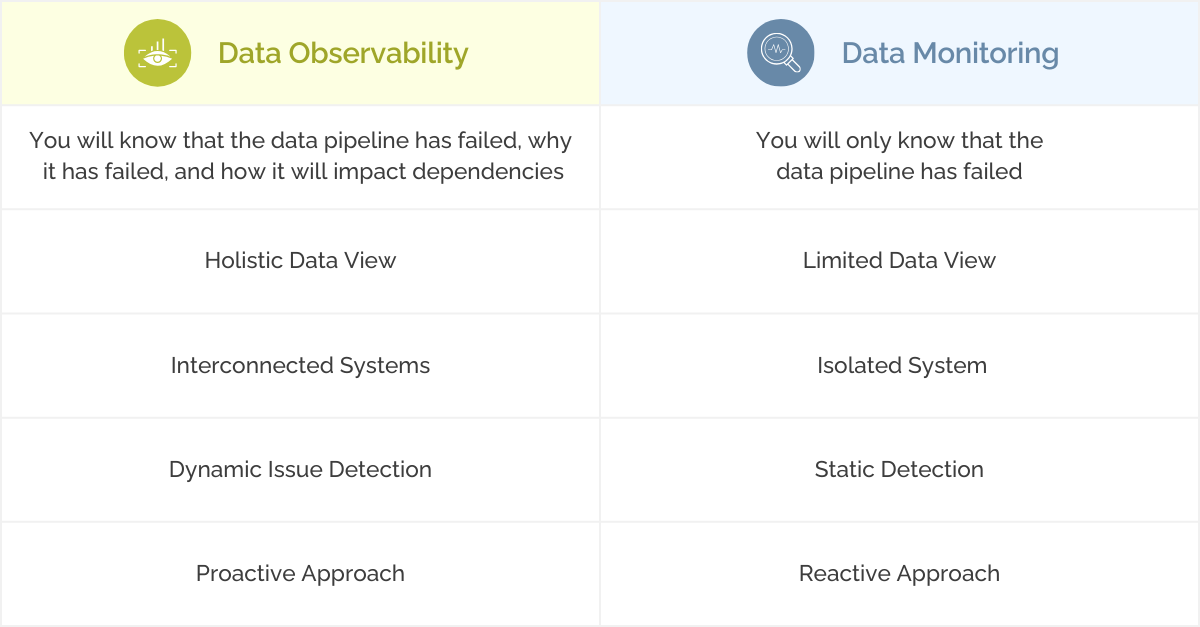

Data Observability vs. Data Monitoring

Data monitoring and data observability are related concepts, but they differ in scope and purpose within the data management ecosystem. Data monitoring involves tracking and analyzing specific metrics within a data system. It identifies and reacts to issues like system downtime or error rates. Data observability, on the other hand, extends beyond traditional data monitoring. It focuses on gaining complete visibility into the entire data pipeline: data flows and interactions are tracked in real time across the various stages of the data lifecycle.

Did you know bad data can cost businesses between $9.7 million and $14.2 million every year?

Why Data Observability Matters: Key Benefits

When we say that data observability is about the visibility you have into your data, the term ‘visibility’ is measurable. It is measured by the close-ended questions a data team can answer. These may include questions about the status of ELT jobs, the dependencies between tables and columns, user access to dashboards, and the quality and timeliness of data. Data observability is a proactive way of identifying and resolving issues with both the data itself and the pipelines that handle it.
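
As a rough illustration, two such close-ended questions ("Did the last ELT job succeed?" and "Is the data fresh enough?") could be answered with a few lines of Python. The `JobRun` record, its fields, and the six-hour freshness window are assumptions made for this sketch; a real platform would read this information from its own job metadata.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class JobRun:
    """Hypothetical record of the most recent run of an ELT job."""
    job_name: str
    status: str            # "success" or "failed"
    finished_at: datetime  # timezone-aware completion time


def last_run_succeeded(run):
    """Close-ended question 1: did the last ELT job succeed?"""
    return run.status == "success"


def is_fresh(run, max_age, now=None):
    """Close-ended question 2: did the job finish within `max_age`?"""
    now = now or datetime.now(timezone.utc)
    return now - run.finished_at <= max_age


if __name__ == "__main__":
    run = JobRun(
        job_name="load_daily_sales",
        status="success",
        finished_at=datetime.now(timezone.utc) - timedelta(hours=2),
    )
    print("last run succeeded:", last_run_succeeded(run))
    print("data is fresh:", is_fresh(run, max_age=timedelta(hours=6)))
```

The more of these yes/no questions a team can answer automatically, the more visibility it has.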

Data observability serves as the most effective safeguard against bad, inaccurate, or unreliable data. Imagine a scenario where a retail company ‘Y’ relies on a daily sales report generated from its data system. Without data observability, the sales team might not immediately notice if the report starts showing inaccurate figures.

Traditional methods make it difficult to identify such issues early on, and the business could unknowingly operate on flawed data. However, with data observability, the system continuously monitors the data pipelines and facilitates timely and accurate sales reports. This proactive approach prevents the sales team from working with misleading data and allows them to maintain trust in the information they use for decision-making.

Here are some of the key advantages of data observability:

Timely Issue Identification – Data observability allows businesses to detect errors, anomalies, and disruptions in data pipelines in real time. This enables them to intervene quickly and prevent data issues from escalating. Such early detection minimizes downtime and ensures that critical data resources are consistent.

Enhanced Operational Efficiency – By providing a holistic view of data operations, data observability reduces the effort and time needed for troubleshooting. Furthermore, operational insights contribute to optimizing data processes and enhancing the overall workflow of data teams.

Risk Mitigation and Enhanced Compliance – One of the best aspects of data observability is that it provides a transparent overview of data lineage: where the data originated, how it was transformed, and how it is used. This supports organizations in validating proper data utilization and adherence to regulatory standards.
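
Data lineage can be pictured as a graph that links each dataset to the sources it was built from. The Python sketch below walks such a graph to list everything a report depends on. The dataset names and the dictionary layout are hypothetical and chosen only for illustration; lineage tools typically derive this graph from metadata rather than from a hand-written mapping.

```python
def upstream_sources(dataset, lineage):
    """Return every dataset that `dataset` depends on, directly or
    indirectly. `lineage` maps a dataset to its direct sources."""
    seen = set()
    stack = [dataset]
    while stack:
        current = stack.pop()
        for parent in lineage.get(current, []):
            if parent not in seen:  # also guards against cycles
                seen.add(parent)
                stack.append(parent)
    return seen


if __name__ == "__main__":
    # Hypothetical lineage for a daily sales report.
    lineage = {
        "daily_sales_report": ["sales_clean"],
        "sales_clean": ["sales_raw", "store_reference"],
    }
    print(sorted(upstream_sources("daily_sales_report", lineage)))
```

With this view, an auditor can check which raw sources feed a regulated report, and an engineer can see which inputs to inspect when that report looks wrong.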

Why Choose Informatica to Drive Data Observability

Ensuring data observability requires the integration of cutting-edge data quality solutions, automation processes, and data governance practices. Informatica, a global leader in Enterprise Cloud Data Management, provides a complete suite of tools that help create a strong foundation for enhanced data visibility and control. Businesses can elevate data observability with a comprehensive approach that involves monitoring, tracking, and alerting mechanisms to provide a nuanced understanding of their data’s overall health.

Let’s take a look at some of the key advantages offered by Informatica:

1. Accessible, High-Quality, and Trustworthy Data For All

High-quality data is necessary for effective data observability. It ensures accurate insights and reliable monitoring and issue detection in data pipelines, enabling businesses to make informed decisions with confidence and agility.

Leverage pre-built rules and accelerators with Informatica to allow seamless application of standardized data quality measures across diverse datasets from any source. Informatica’s Cloud Data Quality product enables you to effectively oversee the complete data quality workflow, irrespective of your organization’s scale, or the nature and quantity of data. Cloud Data Quality eliminates the need for additional IT coding or development.

The secure and reliable infrastructure allows you to prioritize operational excellence over investing in extra resources. Cloud Data Quality allows you to engage in iterative data analysis to gain deeper insights into the characteristics and well-being of your data. Furthermore, Informatica’s CLAIRE engine provides metadata-driven AI for Cloud Data Quality. It delivers intelligent suggestions for data quality based on the management of similar data.

2. AI Automation for Enhanced Productivity

Automation is important for achieving scalable data observability. By deploying automation processes, organizations can easily identify and address issues within data pipelines, ensuring a prompt response to maintain the reliability of their data infrastructure.

Informatica’s CLAIRE AI engine empowers administrators and operational professionals with data observability and automation. It reduces manual tasks associated with security and infrastructure operations by as much as 60%. By leveraging CLAIRE’s AI copilot capabilities, organizations can benefit from enhanced data observability, self-tuning, self-healing, intelligent scheduling, integrated orchestration, and automated resource allocation. Businesses using the CLAIRE solution can:

- Achieve a 50% reduction in data classification time

- Speed up data discovery by a factor of 100

- Witness increased productivity by 20% or more

3. Data Governance and Intelligence at its Best

A strategic data governance framework ensures that organizational data is accessible, usable, integrated, and secured. It is important for effective data observability because it guarantees the delivery of high-quality data through data pipelines and keeps data usage within established guidelines.

With Informatica’s cloud data governance, you can connect technical metadata with relevant business context and enable a unified view and understanding of your data. One of the best parts is that it ensures the consistency, accuracy, and reliability of your data through integrated cataloging, governance, and quality measures.

Furthermore, Informatica’s Cloud Data Governance and Catalog, an integral part of the Informatica Intelligent Data Management Cloud, offers predictive data intelligence to tackle data and analytics governance in the cloud. The tool seamlessly integrates data governance, data cataloging, and data quality functionalities. It automates the extraction of data insights, leading to enhanced data intelligence.

Quickly Implement Informatica’s Modern Solutions with LumenData

LumenData is a proud Platinum Partner of Informatica and the #1 Provider of SaaS-Based MDM Implementation Services. Our team has built a comprehensive range of accelerators that enable the migration of both your actual and reference data. We were recently recognized as the Global Delivery Channel Partner of the Year 2023 at the Informatica CKO 2024.

Our partnership with Informatica has helped us to be at the forefront of preparing enterprises to master data capabilities and be future-ready.

Initiate a conversation today to discuss how we can facilitate a modern data journey for your business.

Authors

Shalu Santvana

Content Crafter

Athira NS

Technical Lead